Well, we’ve seen what Adobe is bringing to 3D and UX design, but Adobe Research has developed some other interesting apps and highlighted them at the 2016 Adobe MAX conference. They run the gamut, from 2D and 3D to voice and VR, leaving us with something to ponder about the future of 3D product design.

Bear in mind, Adobe has yet to announce release dates for any of these (still in development) apps, but they merit a rundown for the technological advances the graphics software company is pursuing. With each of these, just imagine how it would/could/should apply to 3D modeling/design/rendering software. Let’s begin with a look at StyLit.

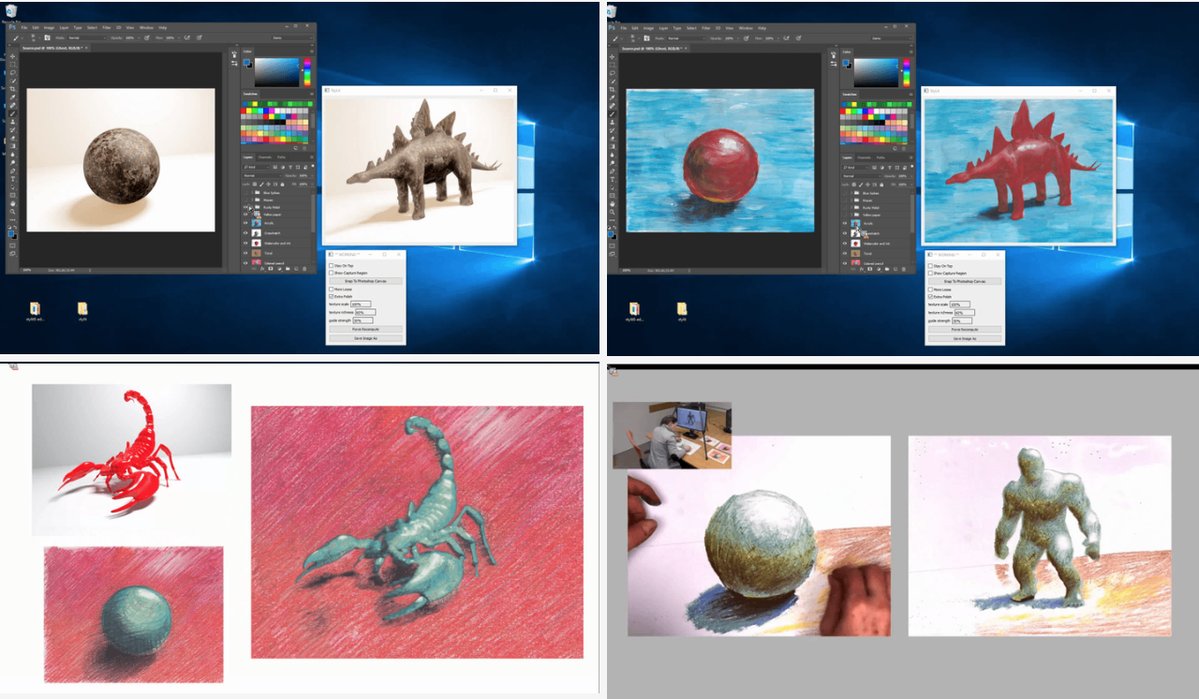

StyLit

Simply put, StyLit renders a 3D image in real time based on what the user draws and colors. Now here is the tricky part: the rendered image doesn’t need to be the same thing the user is drawing. For example, in the video Adobe’s Paul Asente grabbed an image of a dinosaur and rendered it as an artistic 3D image by drawing and coloring nothing but a ball, essentially porting his style to the digital representation. And it all happens in real time. Amazing.

A camera situated over the drawing area captures the lines and colors, and an algorithm maps them onto the 3D model. Even edits to the drawing are ported to the image in real time and can be adjusted on the fly. The work was published at SIGGRAPH 2016 by Jakub Fišer and a team from Prague-based CTU, together with Adobe Research. A demo is now available at stylit.org.

VoCo

You’ve heard of putting words into someone’s mouth. That is exactly what VoCo does. It is being touted as ‘Photoshopping for Voiceovers’, and rightly so, as the application lets you edit speech as text after sampling a voice. Yes, you can make that sampled voice say anything you want just by typing the text into the VoCo editor, and the result sounds identical to the original speaker. In the demo given at Adobe MAX by Adobe engineer Zeyu Jin, you’ll see how easy voice editing will become.

According to Adobe, the software needs about 20 minutes of speech audio to analyze the voice and its patterns; after that, the audio can be edited with very few mistakes in the result. Freakin’ scary and freakin’ cool. Could someone use my voice for trickery or something more malicious? In the video, Zeyu states that there are safeguards in place, including something akin to a watermark, that would prevent others from doing just that. Unfortunately, there is no free demo that we can play around with just yet, but chances are we will see (hear) it at some point this year.

CloverVR

Another of Adobe’s previews is CloverVR. This app provides Adobe Premiere-style editing tools directly inside your VR video environment. The current process of editing 360° video requires users to constantly put on and take off the VR headset: viewing, editing, viewing, editing… it’s highly inefficient. In the video, Adobe’s Stephen DiVerdi demonstrates CloverVR’s capabilities, using an Oculus Rift to adjust the look-at direction for the transition between two video clips.
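To make the look-at adjustment a little more concrete, here is a rough Python sketch of the arithmetic behind lining up two 360° clips. This is not Adobe’s code or anything taken from CloverVR; the function names, the degree-based yaw angles, and the assumption of equirectangular frames (where yaw maps linearly to horizontal pixels) are all hypothetical, chosen only for illustration.

```python
def yaw_offset_deg(viewer_yaw_deg: float, subject_yaw_deg: float) -> float:
    """Rotation (in degrees) to apply to the incoming clip so that its
    subject lands where the viewer is already looking.

    Hypothetical helper: both angles are yaw values in degrees.
    """
    # Wrap the difference into [-180, 180) so the clip turns the short way round.
    return (viewer_yaw_deg - subject_yaw_deg + 180.0) % 360.0 - 180.0


def column_shift_px(frame_width_px: int, offset_deg: float) -> int:
    """Horizontal pixel shift equivalent to a yaw rotation.

    Assumes an equirectangular frame, where 360 degrees spans the full width.
    The sign convention (which way the pixels move) is an assumption too.
    """
    return round(offset_deg / 360.0 * frame_width_px) % frame_width_px


# The viewer is looking at 350 degrees; the action in the next clip sits at 10.
offset = yaw_offset_deg(350.0, 10.0)
shift = column_shift_px(3840, offset)
```

In this example the second clip would be turned by -20 degrees, so the action appears right where the viewer is already looking when the cut happens.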

Using the app’s toolset, Stephen splices together two video scenes so that the action in each appears relative to the area you are viewing. You manipulate the editing tools in VR using the headset’s hand controllers rather than a keyboard, which seems to make the editing process easier to some degree. It also seems to provide a better perspective for editing 3D content than viewing it on a flat display. Again, there’s no word yet on when CloverVR or the other applications will be released, so I will update this when release information becomes available.

Adobe previewed quite a few different technologies, all approaching creative workflows a little, and often a lot, differently than usual. I recommend viewing them all. We’re hopeful we’ll see some of this sort of tech or, at least, this type of innovative thinking when it comes to our precious product development software and tools.
