It just makes sense. Take some photos of a thing, upload them, 3D print that sucka. My choice? Photos of my fist hitting my face, repeatedly. Unfortunately, motion capture and ruptured blood vessels cannot yet be translated into seamless point cloud data. Nonetheless, hypr3D is putting 3D prints where your 2D prints should be and letting you do it all online, right now. And no, we're not talking about pulling pixels off single photos either. This is real 3D geometry generated from your photos or video, for free. Check it out.

hypr3D: photogrammetry of the future

hypr3D launched in August 2010 and is run by Ash Martin and Tom Milnes. Other photogrammetry projects, such as Autodesk's Project Photofly, already let you create usable 3D data from photos taken with your own camera. hypr3D moves the whole process online, adds video to the mix and removes the need to download or install any additional software.

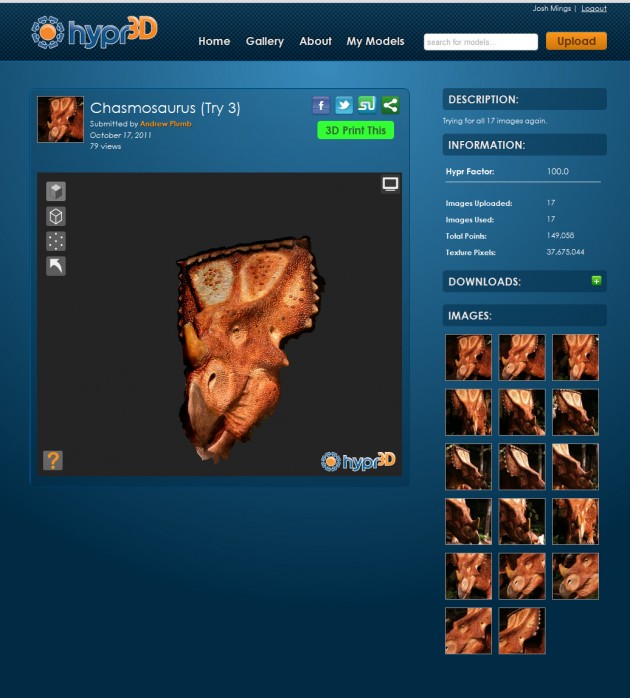

hypr3D is a web-based software service that allows anyone to create a 3D model by simply taking a series of photos or a video of an object or scene. Each model is given a unique model page with an interactive 3D viewer and all of the digital model files created are available to download for free.

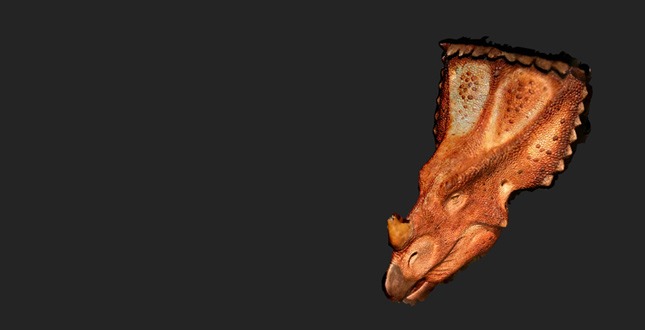

You can download the models as .STL, .DAE or .PLY files and then, as you would expect, send them off to your favorite 3D printing service or load them onto your own printer. If this isn't a reason to get your own 3D printer, I don't know what is. hypr3D is essentially removing an entire layer of messing about with software and 3D scans. What they still need to sort out is the photo/video upload and the conversion from photos to a point cloud and mesh. I wasn't able to get my photos uploaded or a mesh created, but we did speak to someone who has had some success with it: Andrew Plumb.

The hypr3D experience

Over on Google+, I asked Andrew Plumb about his experience.

More experiments are at https://www.hypr3d.com/users/4e985d62b9cc0c0001000026

They (Hypr3D) are still working out some usability issues and bugs, but they’ve been responsive and it does look promising.

Take the Star Wars Landspeeder set as an example.

– The first was primarily a partial-upload issue. That seems to have been resolved, but the UI needs some tweaks to show upload status+progress. (They’re working on it.)

– The second worked using the 23 images I pre-selected.

– The third included the 23-image subset from the successful second try and hit the maximum 40-image limit, but apparently didn't like the extra image data.

– A human-based image selection filter is still a good idea.

– It’s a tough image set to work with because the table top I put the model on has a substantial amount of glare+reflection.

– I'm not (currently) able to upload my full set of 137 images (200MB total) to see if it fares better or worse than the results from when I tried the dataset with my3dscanner.com.

Good stuff. This really is the advent of 2D data, 3D geometry and fabrication coming together online. Way to go, hypr3D.

A big hat tip to Ben Eadie, who has successfully scanned blood spatter and punches.