If you thought touchscreens were the pinnacle of intuitive interfaces, think again. Google is working on a project that analyzes delicate hand motions and translates them into digital data, all without ever touching a screen.

Project Soli is an interaction sensor that uses a miniature radar to track hand movements down to the sub-millimeter level. We use our hands for all sorts of tasks (exerting pressure, handling delicate materials, and expressing ourselves, to name a few), and Soli is designed to track these movements at high speed and with high accuracy. Combined with a set of universal, pre-programmed tool gestures, Soli will allow you to control different devices without ever touching them.

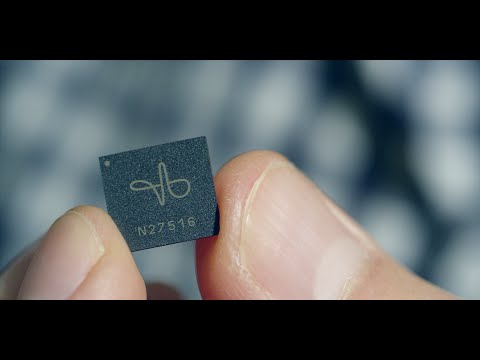

At the heart of the project is the 8mm x 10mm Soli chip, which contains the sensor and antenna array. Soli transmits electromagnetic waves towards your hand, picks up the signal reflected back, and translates the gestures you make into data. Because Soli uses an advanced gesture recognition pipeline and reads the data at a high frame rate, whatever device you have connected can respond to your inputs accordingly.

Most of these pre-programmed, readable gestures are simple and easy to understand. Pressing your thumb and index finger together, for example, presses a virtual button. Sliding your thumb along the side of your index finger moves a slider on a device. But most important of all, twiddling your thumb and index finger together lets you adjust the volume on your noisy roommate’s speakers. While there will no doubt be more gestures that make full use of the human hand, these first three show that Soli reads hands far more accurately than any carnival palm reader.
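To make the idea concrete, here is a minimal Python sketch of how a connected device might react to those three gestures once they have been recognized. Everything in it is an assumption made for illustration: the gesture labels `"button"`, `"slider"`, and `"dial"`, the `Device` class, and the `handle_gesture` function are hypothetical and are not part of any published Soli API.

```python
from dataclasses import dataclass


@dataclass
class Device:
    """Hypothetical device state; the field names are illustrative only."""
    power_on: bool = False
    slider: float = 0.0   # slider position, clamped to 0.0 .. 1.0
    volume: int = 5       # volume level, clamped to 0 .. 10


def handle_gesture(device: Device, gesture: str, amount: float = 0.0) -> Device:
    """Apply one recognized gesture to the device state.

    `gesture` is an assumed label produced by some recognition step;
    `amount` is an assumed signed magnitude for the continuous gestures.
    """
    if gesture == "button":
        # Thumb and index finger pressed together: toggle the virtual button.
        device.power_on = not device.power_on
    elif gesture == "slider":
        # Thumb sliding along the index finger: move the slider.
        device.slider = min(1.0, max(0.0, device.slider + amount))
    elif gesture == "dial":
        # Thumb and index finger twiddling: turn the volume up or down.
        device.volume = min(10, max(0, device.volume + round(amount)))
    else:
        raise ValueError(f"unknown gesture: {gesture!r}")
    return device


speakers = Device()
handle_gesture(speakers, "button")
handle_gesture(speakers, "dial", -2)
print(speakers)
```

The sketch only covers the last step, mapping a gesture label to an action. The hard part, turning raw radar data into that label, is what Soli's recognition pipeline does.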

Before Google arrived at the miniature sensor, the team went through several prototypes of different sizes. The first prototype was built from existing components, including multiple cooling fans, making it ginormous compared to the final Soli sensor. After 10 months of redesigning and reimagining, they rebuilt the entire radar as a chip that can be installed in even the tiniest devices. This not only saves a lot of space but also reduces the production cost and power consumption of the technology.

Two modulation architectures were developed for the Soli chip: a Frequency Modulated Continuous Wave (FMCW) radar and a Direct-Sequence Spread Spectrum (DSSS) radar. Both versions of the chip integrate the entire radar system, allowing them to be embedded in most phones, laptops, and mobile devices.
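For readers curious about the FMCW approach in general, the sketch below shows the textbook relationship between target distance and the "beat" frequency an FMCW radar measures with a linear chirp. It describes FMCW radar in general, not Soli's actual implementation. The function names are made up for illustration, and the bandwidth and chirp duration are placeholder values, not Soli specifications.

```python
C = 299_792_458.0  # speed of light in m/s


def beat_frequency(range_m: float, bandwidth_hz: float, chirp_s: float) -> float:
    """Beat frequency produced by a target at range_m for a linear chirp
    that sweeps bandwidth_hz in chirp_s seconds."""
    return 2.0 * range_m * bandwidth_hz / (C * chirp_s)


def range_from_beat(beat_hz: float, bandwidth_hz: float, chirp_s: float) -> float:
    """Invert the relationship above: recover range from a measured beat frequency."""
    return C * beat_hz * chirp_s / (2.0 * bandwidth_hz)


def range_resolution(bandwidth_hz: float) -> float:
    """Smallest range separation the chirp can resolve: c / (2 * B)."""
    return C / (2.0 * bandwidth_hz)


# Placeholder values chosen only for illustration.
bandwidth = 4e9   # 4 GHz sweep
chirp = 1e-3      # 1 ms chirp duration
hand_range = 0.3  # hand held 30 cm from the sensor

f_b = beat_frequency(hand_range, bandwidth, chirp)
print(f"beat frequency: {f_b:.1f} Hz")
print(f"recovered range: {range_from_beat(f_b, bandwidth, chirp):.3f} m")
print(f"range resolution: {range_resolution(bandwidth) * 100:.2f} cm")
```

Note that the range resolution of a chirp like this is measured in centimeters, so fine finger tracking cannot come from range measurement alone. Radars typically recover much smaller motions from phase and Doppler changes in the reflected signal over time.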

There is no definite release date for Project Soli, but if hands-free, reactive technology is the future, then sign us up.

More details on the Soli chip can be found on the Google Advanced Technology and Projects webpage.